Lab Setup for Microsoft Exam 70-412

In this article we explore how to build a lab for Microsoft Exam 70-412 so you can test the topics of the exam. The topics for Microsoft exam 70-412, Configuring Advanced Windows Server 2012 Services (a requirement for the MCSA certification), can be found here: https://docs.microsoft.com/en-us/learn/certifications/exams/70-412

- Configure and Manage High Availability

  - NLB, Failover Clustering

- Configure File and Storage Solutions

  - BranchCache, DAC, iSCSI, DeDup

- Implement Business Continuity and Disaster Recovery

  - Azure backup, bcdedit, site-level fault tolerance

- Configure Network Services

  - DHCP superscopes, DHCP High Availability, DNSSEC, IPAM

- Configure the Active Directory Infrastructure

  - UPN Suffix, Forest Trusts, SID Filter, Site Links

- Configure Access and Information Protection Solutions

  - AD FS, claims-based authentication, Certificates, PKI, AD RMS

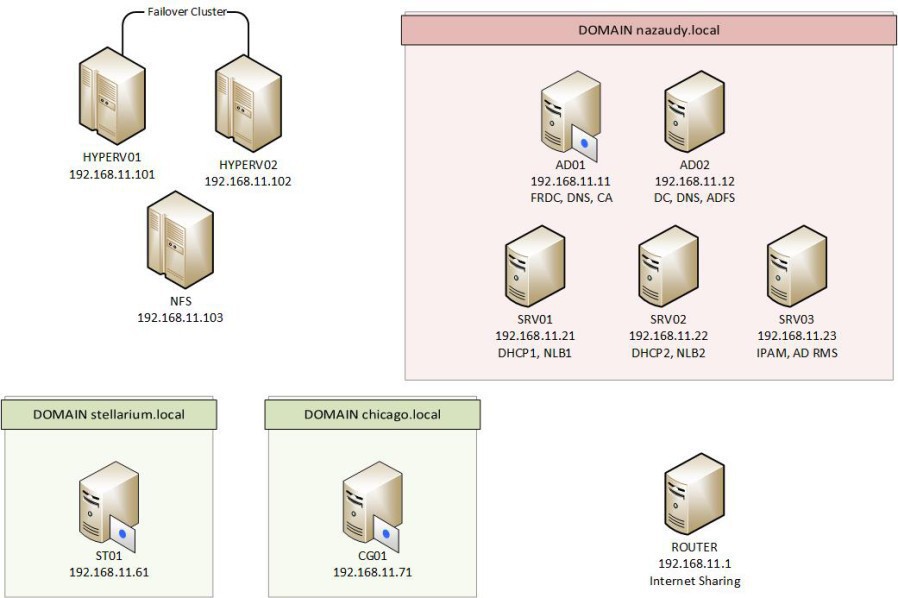

These are the servers I configured in VMware Workstation for this exam. They are very similar to the ones I used in the lab for exam 70-411

To create VMs that can run Hyper-V, choose the guest operating system "Hyper-V (unsupported)" when you create them. The drawback is that VMware Workstation won't let you install VMware Tools on an "unsupported" VM, so the option VM >> Update VMware Tools... is greyed out. To work around this, insert the updated VMware Tools disc in another, "supported" VM and share its CD-ROM drive. You can then install VMware Tools on the "unsupported" Hyper-V VM over the network

1. Configure and Manage High Availability

Network Load Balancing (NLB) is a Windows Server feature that makes a group of servers appear as a single server to external clients. An NLB cluster improves both the availability and the scalability of the service it supports, for example an RDP server farm, a web server farm or a VPN server farm. Each request is handled by one server only, so NLB suits only stateless applications: changes made on one server are not copied to the other servers

Install-WindowsFeature NLB -IncludeManagementTools

## Note that Add-WindowsFeature is an alias of Install-WindowsFeature

## and Remove-WindowsFeature is an alias of Uninstall-WindowsFeature

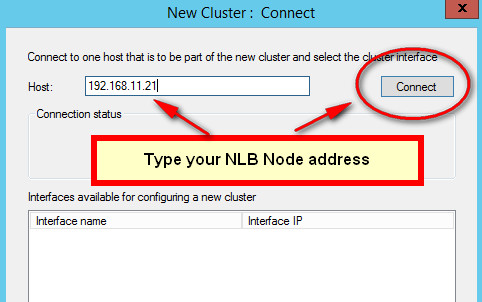

Note that the first time you open the Network Load Balancing Clusters console and choose "Create a New Cluster", you need to enter the IP address of your NLB node. The field "Interfaces available for configuring a new cluster" stays empty until you enter the IP address of the host (probably the server you're working on) and click "Connect"

nlbmgr; the console used to manage an NLB cluster. Note that a feature's management tools are only installed if you add the -IncludeManagementTools switch to Install-WindowsFeature. Otherwise you won't find them

The virtual IP address is shared by all members of the NLB cluster. Port rules are the most important part of an NLB cluster's configuration, because they define which traffic is load balanced. Only one port rule can ever apply to an incoming packet. When you create custom port rules, these are the settings for the filtering mode:

- Multiple Host; traffic covered by the rule is distributed across all the nodes, according to the affinity setting:

  - Affinity-None; it should really be called "any". With no client affinity, each new connection from a client can be handled by any node in the cluster, depending on how the load is shared: "Hey chaps, can one of you take the new guy coming in asking for stuff?". Successive connections from the same client may therefore land on different nodes

  - Affinity-Single; all connections from a given client IP address are directed to the same node. If Client1 connects to Host1 on its first connection, it will keep connecting to Host1 in the future. The advantage of this setting is that user state information can be kept locally on each node, ready for the user to pick up on the next session

  - Affinity-Network; all clients from the same /24 network are directed to the same node

  - The Timeout setting (extended affinity, available with Single or Network affinity) keeps a client on the same node for the specified number of minutes, even after the cluster configuration changes. This is useful for a web storefront: a customer who reconnects still finds the items in their basket, rather than an empty basket on a new host

- Single Host; all traffic covered by the rule is handled by a single host, the one with the highest handling priority (there is no load balancing). This mode is normally used when troubleshooting, doing upgrades, backups, etc
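If you prefer PowerShell, the same port rules can be created with the NetworkLoadBalancingClusters module. Below is a minimal sketch, run on a node of an existing cluster. The ports, affinity and timeout values are lab assumptions, not recommendations:

```powershell
# Lab assumptions: run on a node of an existing NLB cluster; ports and timeout are examples only

# Remove the default "all ports" rule so that only the rules below apply
Get-NlbClusterPortRule | Remove-NlbClusterPortRule -Force

# HTTP: Multiple Host filtering with no affinity (any node can take any connection)
Add-NlbClusterPortRule -StartPort 80 -EndPort 80 -Protocol Tcp -Mode Multiple -Affinity None

# HTTPS: Multiple Host filtering with Single affinity and a 10-minute extended affinity timeout
Add-NlbClusterPortRule -StartPort 443 -EndPort 443 -Protocol Tcp -Mode Multiple -Affinity Single -Timeout 10

# RDP: Single Host filtering, handled by the node with the highest handling priority
Add-NlbClusterPortRule -StartPort 3389 -EndPort 3389 -Protocol Tcp -Mode Single

# Review the resulting rules
Get-NlbClusterPortRule
```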

The Load Weight setting lets you give each node a greater or smaller share of the traffic directed at the farm (it applies to port rules in Multiple Host mode)

Host Priority determines which server in an NLB cluster receives traffic that is not covered by a port rule, while Handling Priority is a custom server priority value used for traffic covered by a port rule but assigned Single Host filtering mode

A rolling upgrade lets you keep the NLB cluster online while you upgrade its servers one at a time. Use the drainstop function to take each node offline: the node stops accepting new connections and lets its existing connections finish gracefully before it stops. For NLB to work, all nodes must be on the same subnet, all NICs must use the same operation mode (either unicast or multicast), and they must use static IP addresses

An NLB cluster can have up to 32 hosts. By default the first host added gets host priority 1, the second gets priority 2, and so on. The cluster can run in one of these operation modes:

- Unicast; the cluster MAC address replaces the MAC address of each host's NIC, so all hosts share the same MAC address. Don't select this mode if your hosts have only one network card, because the nodes will no longer be able to communicate with each other

- Multicast; each node keeps its own MAC address, and a multicast cluster MAC address is shared among all hosts

- IGMP multicast; prevents switch flooding, as long as the network switches support IGMP snooping
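Below is a minimal PowerShell sketch of building the cluster without nlbmgr. It includes the drainstop used during a rolling upgrade. The node names (WEB01, WEB02), the interface name "Ethernet" and the virtual IP are hypothetical lab values:

```powershell
# Hypothetical lab values: nodes WEB01/WEB02, NIC "Ethernet", virtual IP 10.10.10.100

# On WEB01: install NLB, then create the cluster on its static-IP NIC in multicast mode
Install-WindowsFeature NLB -IncludeManagementTools
New-NlbCluster -InterfaceName "Ethernet" -ClusterName "NLB-WEB" `
    -ClusterPrimaryIP 10.10.10.100 -SubnetMask 255.255.255.0 -OperationMode Multicast

# Join WEB02 (the NLB feature must already be installed on it)
Get-NlbCluster | Add-NlbClusterNode -NewNodeName "WEB02" -NewNodeInterface "Ethernet"

# Rolling upgrade: drainstop WEB02 so existing connections finish before it leaves the cluster
Stop-NlbClusterNode -HostName "WEB02" -Drain

# ...upgrade or patch WEB02, then bring it back into the cluster
Start-NlbClusterNode -HostName "WEB02"
```

-OperationMode also accepts Unicast and IgmpMulticast, which match the other two modes listed above.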

Failover Clustering is a feature that keeps selected services or applications (databases, mail servers, file and print servers, Hyper-V, etc) available even if the server hosting them fails. Unlike NLB, Failover Clustering is normally used to provide high availability for data that clients update frequently. In a failover cluster, the clustered roles keep their data on shared storage that every node can reach, such as Cluster Shared Volumes (CSVs). All hardware components must meet the requirements for the "Certified for Windows Server 2012" (or 2012 R2) logo, and the nodes need to run either the Standard or the Datacenter edition of Windows Server 2012 R2. The cluster can either be registered in AD or be an "Active Directory-detached cluster", whose name exists only as records in DNS (the nodes themselves are still domain members). Microsoft recommends not using AD-detached clusters in scenarios that require Kerberos authentication. In other words: if you can use AD, do it!

Quorum is reached when a majority of the voting nodes are functional and can communicate with one another. The failover cluster is only allowed to run once quorum is reached. To determine quorum, the nodes cast votes. The votes can be managed dynamically, or you can configure them yourself: for example, if you want the cluster to keep providing services when only 2 out of 6 servers are online, you can adjust the votes in the Cluster Quorum Settings

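A witness acts as a 'tiebreaker' when the number of voting nodes is even. A disk witness is a shared disk, accessible by all nodes, that holds a copy of the failover cluster database. A file share witness is a share on another server that casts a vote but does not hold a full copy of the database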

| Mode | Voting system |
|---|---|
| Node Majority | Each node votes |
| Node and Disk Majority | Each node plus the disk witness votes |
| Node and File Share Majority | Each node plus the file share witness votes |
| No Majority: Disk Only | One node with the specified disk has quorum |
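In PowerShell, each mode in the table maps to a switch of Set-ClusterQuorum, and individual votes map to the NodeWeight property of a node. This is a sketch only: run just the line for the mode you want. The disk name, share path and node name are hypothetical:

```powershell
# Hypothetical names: "Cluster Disk 1", \\fs01\ClusterWitness, Node06. Run only the mode you need.
Set-ClusterQuorum -NodeMajority                                   # Node Majority
Set-ClusterQuorum -NodeAndDiskMajority "Cluster Disk 1"           # Node and Disk Majority
Set-ClusterQuorum -NodeAndFileShareMajority "\\fs01\ClusterWitness" # Node and File Share Majority
Set-ClusterQuorum -DiskOnly "Cluster Disk 1"                      # No Majority: Disk Only

# Remove the vote of one node (0 = no vote, 1 = vote), then check who is voting
(Get-ClusterNode -Name "Node06").NodeWeight = 0
Get-ClusterNode | Format-Table Name, State, NodeWeight, DynamicWeight
```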

Storage Pools; from physical disks you create a storage pool, from the pool you create a virtual disk, and finally you create volumes on the virtual disk, which are presented to the cluster

CSVs (Cluster Shared Volumes) are formatted with NTFS. To distinguish them from normal NTFS volumes, Windows Server displays them as formatted with CSVFS (Cluster Shared Volume File System). The Scale-Out File Server role uses only CSVs

Cluster-Aware Updating (CAU) automates the process of applying Windows Updates to the nodes of a cluster. Unlike NLB, there is no way to upgrade the OS of a cluster's nodes short of starting from scratch... unless, of course, you use the More Actions >> Migrate Roles utility, which migrates the roles to updated-OS nodes that belong to a different cluster

Add-CauClusterRole

#adds the CAU clustered role, so the cluster schedules and installs Windows Updates on its nodes by itself

Invoke-CauScan

#scans the cluster nodes and lists the updates that would apply (from Windows Update or WSUS), without installing them
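The two cmdlets above can take a schedule and target options, and an updating run can also be started on demand. Below is a sketch for a hypothetical cluster named CLU01. The schedule and retry values are assumptions, so check them against the ClusterAwareUpdating module help:

```powershell
# Hypothetical cluster name CLU01; schedule and retry values are lab assumptions

# Self-updating mode: the CAU role updates the nodes on the 3rd Tuesday of each month
Add-CauClusterRole -ClusterName "CLU01" -DaysOfWeek Tuesday -WeeksOfMonth 3 `
    -MaxRetriesPerNode 3 -EnableFirewallRules -Force

# Preview the applicable updates per node, without installing anything
Invoke-CauScan -ClusterName "CLU01" -CauPluginName Microsoft.WindowsUpdatePlugin

# Remote-updating mode: start an updating run now, then read the latest report
Invoke-CauRun -ClusterName "CLU01" -MaxFailedNodes 1 -RequireAllNodesOnline -Force
Get-CauReport -ClusterName "CLU01" -Last
```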

Configuring Roles; to prepare a service or application for high availability in a failover cluster, you first install that service or application on all the nodes and then run the "Configure Role" wizard

Scale-Out File Servers (SoFS) are designed for SQL database applications (and application data folders too) that handle very heavy workloads. These clusters use only CSV storage, and they are not compatible with BranchCache, Deduplication, DFS Namespaces and Replication, or File Server Resource Manager. If you need any of these services, use the general-purpose File Server role instead
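Below is a minimal sketch of the SoFS building blocks in PowerShell. It turns a cluster disk into a CSV, adds the role and publishes a continuously available share. The disk, role, folder and service account names are hypothetical:

```powershell
# Hypothetical names: "Cluster Disk 2", role SOFS01, account nazaudy\SQLService

# Turn an available cluster disk into a CSV (it appears under C:\ClusterStorage\)
Add-ClusterSharedVolume -Name "Cluster Disk 2"

# Create the Scale-Out File Server role
Add-ClusterScaleOutFileServerRole -Name "SOFS01"

# Continuously available share on the CSV, scoped to the SoFS name
New-Item -Path "C:\ClusterStorage\Volume1\SQLData" -ItemType Directory
New-SmbShare -Name "SQLData" -Path "C:\ClusterStorage\Volume1\SQLData" `
    -FullAccess "nazaudy\SQLService" -ContinuouslyAvailable $true -ScopeName "SOFS01"
```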

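Active Directory-detached cluster; a cluster whose network name and IP address are registered only in DNS, so AD holds no computer object for the cluster (the nodes themselves must still be joined to the domain). It's useful if you want to avoid the havoc of somebody deleting the cluster name object from AD in a domain-based cluster. You create it with the -AdministrativeAccessPoint Dns parameter in PowerShell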

New-Cluster -Name SQL_Cluster -Node Node01,Node02 -StaticAddress 10.10.10.190 -NoStorage -AdministrativeAccessPoint Dns

The heartbeat settings of a failover cluster are configured using PowerShell, not in the GUI

Role Startup Priority; its purpose is to make sure the most critical roles start first and get priority access to resources

Node Drain basically puts the node into maintenance mode. Drain on Shutdown is only available in the R2 release of Windows Server 2012. If a node is shut down without first being put into maintenance mode, it moves all workloads to the other nodes of the cluster
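Below is a sketch of the three settings above as they appear in PowerShell: heartbeat properties, role startup priority and node drain. The cluster (CLU01), role (SQL) and node (Node01) names are hypothetical, and the threshold value is just an example:

```powershell
# Hypothetical names: cluster CLU01, clustered role "SQL", node Node01
$cluster = Get-Cluster -Name "CLU01"

# Heartbeats: delay in milliseconds between heartbeats, threshold = missed heartbeats before a node is considered down
$cluster | Format-List SameSubnetDelay, SameSubnetThreshold, CrossSubnetDelay, CrossSubnetThreshold
$cluster.CrossSubnetThreshold = 10

# Startup priority of a role: 3000 = High, 2000 = Medium, 1000 = Low, 0 = No Auto Start
(Get-ClusterGroup -Name "SQL" -Cluster "CLU01").Priority = 3000

# Drain on Shutdown (2012 R2): 1 = enabled
$cluster.DrainOnShutdown = 1

# Maintenance: drain the roles off Node01, then resume it and fail the roles back
Suspend-ClusterNode -Name "Node01" -Cluster "CLU01" -Drain
Resume-ClusterNode -Name "Node01" -Cluster "CLU01" -Failback Immediate
```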

VM Monitoring; this is for Hyper-V. It monitors a service inside a clustered VM, and if that service fails, the cluster can restart the VM (and, if needed, fail it over to another node). For it to work, the following requirements must be met:

- Both the Hyper-V host and the VM must run Windows Server 2012 or 2012 R2

- The VM must belong to a domain that the host trusts

- The firewall rules in the Virtual Machine Monitoring group must be enabled on the guest
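Below is a minimal sketch of the two steps involved. The VM name (VM01) and the monitored service (the Print Spooler) are hypothetical examples:

```powershell
# Inside the guest VM: enable the firewall rules of the Virtual Machine Monitoring group
Enable-NetFirewallRule -DisplayGroup "Virtual Machine Monitoring"

# On a cluster node: monitor the Print Spooler service inside the clustered VM "VM01" (hypothetical)
Add-ClusterVMMonitoredItem -VirtualMachine "VM01" -Service "Spooler"
Get-ClusterVMMonitoredItem -VirtualMachine "VM01"
```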

For live migrations outside a clustered environment, you need to choose an authentication protocol on both host servers. It can be either CredSSP (Credential Security Support Provider) or Kerberos. CredSSP requires no configuration, but you must be logged on to the source host to start the migration. Kerberos requires configuration: you need to set up delegation on the Delegation tab of both the source and destination host accounts in AD

Don't forget to enable processor compatibility before migrating between hosts with different processor generations (the VM must be turned off to change it). It's similar to EVC in a VMware cluster
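Below is a sketch of a shared-nothing live migration with Kerberos authentication, including the processor compatibility setting. The host names (HV01, HV02), the VM name and the destination path are hypothetical. Kerberos also needs the constrained delegation described above to be configured in AD:

```powershell
# Hypothetical names: hosts HV01/HV02, VM01, destination folder D:\VMs\VM01

# On both hosts: enable live migration and use Kerberos authentication
Enable-VMMigration
Set-VMHost -VirtualMachineMigrationAuthenticationType Kerberos -UseAnyNetworkForMigration $true

# Processor compatibility can only be changed while the VM is off
Stop-VM -Name "VM01"
Set-VMProcessor -VMName "VM01" -CompatibilityForMigrationEnabled $true
Start-VM -Name "VM01"

# On HV01: move VM01 and its storage to HV02
Move-VM -Name "VM01" -DestinationHost "HV02" -IncludeStorage -DestinationStoragePath "D:\VMs\VM01"
```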

Network Health Protection live migrates a VM automatically to another host if its current host loses connectivity on an external network (the network configured as protected)

Use More Actions >> Copy Cluster Roles to move the clustered roles from a 2008 cluster to a 2012 cluster, effectively upgrading it

To find your way through the PowerShell command jungle, use gcm (alias of Get-Command). The example below searches for any command whose name contains the word "cluster"

gcm | where name -like "*cluster*"

Failover across sites (a multi-site cluster) makes sense when the nodes sit in different sites on different subnets, for example Site1 (10.10.10.0) and Site2 (192.168.0.0)

The Clusters OU; remember to create an OU to hold your Hyper-V hosts and the cluster name object, and give the cluster name object permission to create objects in it on the Security tab. This is particularly important if you want to create a Hyper-V Replica Broker role

2. Configure File and Storage Solutions

BranchCache uses file caching to reduce network traffic across WAN links. In Hosted Cache mode, a server in the branch is configured as the hosted cache server. Branch clients first check this server for a file and only go to the main office if the file isn't there; once a file has been retrieved, it is stored on the hosted cache server. In Distributed Cache mode, the content is cached on the clients themselves. A client that can't find a file in its own cache checks its peers on the local subnet before contacting the main office

To configure the server, just add the BranchCache feature. To configure the clients, enable the two GPO settings found under Computer Configuration >> Policies >> Administrative Templates >> Network >> BranchCache
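Below is a minimal sketch of the same setup in PowerShell, using the BranchCache module. The hosted cache server name bc01.nazaudy.local is hypothetical, and on the clients you enable only one of the two modes:

```powershell
# Content server at the main office: BranchCache for Network Files role service
Install-WindowsFeature FS-BranchCache

# Hosted cache server at the branch (hypothetical bc01.nazaudy.local): feature plus SCP in AD
Install-WindowsFeature BranchCache
Enable-BCHostedServer -RegisterSCP

# Branch clients, as an alternative to the GPO settings: Distributed Cache mode...
Enable-BCDistributed
# ...or Hosted Cache mode instead:
# Enable-BCHostedClient -ServerNames "bc01.nazaudy.local"

Get-BCStatus
```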

Dynamic Access Control (DAC) relies on attributes and metadata (called claims) to give access to files, for example you can give access to a file marked with the attribute TOP_SECRET to users on AD who also have the attribute level of TOP_SECRET. DAC needs the Domain Functional Level to be Windows Server 2012 or higher

Unlike NTFS permissions alone, DAC reduces the number of groups you need to manage permissions. Instead of relying only on NTFS ACLs, DAC lets you create access rules that are more flexible and map to the business needs of your organisation. To configure DAC, do as follows:

- Define user and device claim types

- Enable Kerberos support for claims-based access control

- Configure file classification, enabling or creating the resource properties you need

- Add the resource properties to a resource property list

- Update the AD file and folder objects

- Classify files and folders

- Configure central access rules and policies that include claims

- Finally, deploy the central access policies to the file servers

Once you have set up the claim types, rules and policies in AD Administrative Center, edit the GPO that applies to your file servers. Add the policy under Computer Configuration >> Policies >> Windows Settings >> Security Settings >> File System >> Central Access Policy
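Part of this setup can also be scripted. Below is a sketch that assumes a user claim based on the AD department attribute and a central access rule named "Finance Documents Rule", already built in AD Administrative Center. All names are hypothetical:

```powershell
# Hypothetical names: claim "Department", policy "Finance Policy", rule "Finance Documents Rule"

# User claim type sourced from the AD "department" attribute
New-ADClaimType -DisplayName "Department" -SourceAttribute department

# Central access policy holding an existing central access rule
New-ADCentralAccessPolicy -Name "Finance Policy"
Add-ADCentralAccessPolicyMember -Identity "Finance Policy" -Members "Finance Documents Rule"

# On the file server, once the GPO applies: refresh policy and the resource properties from AD
gpupdate /force
Update-FsrmClassificationPropertyDefinition
```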

File Classification Infrastructure (FCI) integrates with DAC. It uses the "Classification" tab, which appears in the properties of a folder or file when a DAC GPO applies to the file server hosting it. Once a classification rule is set, you can create file management tasks that act on the files it classifies. In the FSRM console, classification rules can only be run all at once, whereas file management tasks can be run individually if needed
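The difference between running classification and running file management tasks is also visible in the FSRM cmdlets. The task name below is hypothetical:

```powershell
# Classification rules can't be run one by one: this runs all of them
Start-FsrmClassification

# File management tasks can be run individually by name ("Expire Confidential Files" is hypothetical)
Start-FsrmFileManagementJob -Name "Expire Confidential Files"
```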

iSCSI; remember that you need to add storage to a target before you can configure the initiator to connect to that target
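Below is a sketch of that order of operations on Windows Server 2012 R2, with the target server SRV01 and the initiator SRV02. The server names, IQN, path and portal IP are hypothetical lab values:

```powershell
# Hypothetical lab values: target SRV01 (10.10.10.10), initiator SRV02, LUN on E:

# --- On the target server SRV01: create the storage, the target, and map them ---
Install-WindowsFeature FS-iSCSITarget-Server
New-IscsiVirtualDisk -Path "E:\iSCSI\LUN1.vhdx" -SizeBytes 20GB
New-IscsiServerTarget -TargetName "Target1" -InitiatorIds "IQN:iqn.1991-05.com.microsoft:srv02.nazaudy.local"
Add-IscsiVirtualDiskTargetMapping -TargetName "Target1" -Path "E:\iSCSI\LUN1.vhdx"

# --- On the initiator SRV02: start the initiator service and connect ---
Set-Service MSiSCSI -StartupType Automatic
Start-Service MSiSCSI
New-IscsiTargetPortal -TargetPortalAddress "10.10.10.10"
Get-IscsiTarget | Connect-IscsiTarget -IsPersistent $true
```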

Data Deduplication is a component of the File and Storage Services role. It reduces the storage footprint of your data and so increases the effective storage capacity, with little impact on performance. DeDup cannot be applied to system volumes or to volumes formatted with ReFS
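Below is a minimal sketch of enabling DeDup on a data volume. It assumes E: is an NTFS data volume. Setting the minimum file age to 0 is only a lab shortcut, so that freshly copied test files get processed:

```powershell
# Assumes E: is an NTFS data (non-system) volume
Install-WindowsFeature FS-Data-Deduplication
Enable-DedupVolume -Volume "E:"

# Lab shortcut: also optimise brand-new files
Set-DedupVolume -Volume "E:" -MinimumFileAgeDays 0

# Run an optimisation job now and check the savings
Start-DedupJob -Volume "E:" -Type Optimization
Get-DedupStatus -Volume "E:"
```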

Storage Tiers (new in Windows Server 2012 R2) let a Storage Spaces virtual disk combine fast SSDs and slower HDDs, with the most frequently used data moved to the SSD tier. Tiered virtual disks must use fixed provisioning

- First go to Storage Pools in Server Manager and create a storage pool from the disks in the Primordial pool

- Create a virtual disk in the pool (this is where you enable storage tiers). The New Volume wizard starts automagically once the virtual disk has been created

- Then go to iSCSI and create an iSCSI virtual disk on that volume
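Below is a sketch of the pool, tiers and volume steps in PowerShell. The pool, tier, disk names and sizes are hypothetical. In a VM lab the virtual disks don't report a media type, so one pooled disk is marked as SSD by hand:

```powershell
# Hypothetical names/sizes: pool Pool1, tiers SSDTier/HDDTier, disk PhysicalDisk1 marked as SSD

# Pool every eligible disk
New-StoragePool -FriendlyName "Pool1" -StorageSubSystemFriendlyName "Storage Spaces*" `
    -PhysicalDisks (Get-PhysicalDisk -CanPool $true)

# Lab trick: tag a pooled disk as SSD so the SSD tier has capacity
Set-PhysicalDisk -FriendlyName "PhysicalDisk1" -MediaType SSD

# One tier per media type, then a tiered virtual disk (tiered disks use fixed provisioning)
$ssd = New-StorageTier -StoragePoolFriendlyName "Pool1" -FriendlyName "SSDTier" -MediaType SSD
$hdd = New-StorageTier -StoragePoolFriendlyName "Pool1" -FriendlyName "HDDTier" -MediaType HDD
New-VirtualDisk -StoragePoolFriendlyName "Pool1" -FriendlyName "TieredDisk" `
    -StorageTiers $ssd, $hdd -StorageTierSizes 10GB, 40GB -ResiliencySettingName Simple

# Initialise, partition and format the new disk (what the New Volume wizard does)
Get-VirtualDisk -FriendlyName "TieredDisk" | Get-Disk | Initialize-Disk -PassThru |
    New-Partition -AssignDriveLetter -UseMaximumSize | Format-Volume -FileSystem NTFS
```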

3. Implement Business Continuity and Disaster Recovery

The Windows Server Backup feature is great, and so is Azure Backup. The Volume Shadow Copy Service (VSS) lets this tool back up files that are in use. When the "VSS full backup" option is used, each file's backup history is updated. Remember that backing up to a remote shared folder overwrites the previous backup

When you select Faster backup performance, backups are incremental, with a full backup after every 14 incremental ones. That makes them faster, yes, but restores take longer

Backup Operators can not only back up and restore files but also shut down the system, log on locally and access the system from the network

Windows Azure Backup; create an account here and test it: https://azure.microsoft.com/en-gb/account/ If you use a self-signed certificate to authenticate your machine against Azure, install the Windows SDK on your machine and run its makecert tool to create the certificate. Upload only the public certificate to Azure, obviously without the private key! Also from Azure, download the Windows Server and System Center Data Protection Manager agent, and install it on the server running Windows Server Backup
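Below is a sketch of the makecert call for such a certificate. It creates the certificate, with its private key, in the local machine's Personal store and writes the public part to a .cer file, which is the file you upload. The CN and expiry date are placeholders:

```powershell
# Run from the Windows SDK tools folder; CN=AzureBackupCert and the expiry date are placeholders
# -r self-signed, -pe exportable private key, -ss/-sr store in LocalMachine\My, -eku client authentication
makecert.exe -r -pe -n CN=AzureBackupCert -ss my -sr localmachine `
    -eku 1.3.6.1.5.5.7.3.2 -len 2048 -e 12/31/2030 AzureBackupCert.cer
```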

wbadmin; the command-line tool of Windows Server Backup. To perform a bare-metal system recovery, use:

wbadmin start sysrecovery
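A recovery needs a backup to restore from. Below is a sketch of the full cycle: take the backup, list the versions, then restore from WinRE. The share \\srv01\Backups is hypothetical, and the version string is a placeholder to replace with one listed by "get versions":

```powershell
# Hypothetical backup share \\srv01\Backups
# Back up C: plus everything needed for bare-metal recovery; -vssFull updates each file's backup history
wbadmin start backup -backupTarget:\\srv01\Backups -include:C: -allCritical -vssFull -quiet

# List the backups stored on that target and note the version identifier
wbadmin get versions -backupTarget:\\srv01\Backups

# From WinRE: bare-metal recovery of a given version (placeholder shown)
wbadmin start sysrecovery -version:MM/DD/YYYY-HH:MM -backupTarget:\\srv01\Backups
```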

Advanced Boot Options menu; you open this menu by pressing F8 at boot, by configuring msconfig while in Windows, or by restarting with the command shutdown /r /o

startrep; runs Startup Repair from WinRE. Use it if the registry has become corrupted

cd \sources\recovery

startrep

bcdboot; use it to copy the critical boot files to the system partition and create a new BCD store based on them

bootrec; use it with the /fixboot and /fixmbr switches. Other switches to use are /scanos and /rebuildbcd

bootrec /scanos

bootrec /fixboot

bootrec /fixmbr

bootrec /rebuildbcd

bcdedit ;use it to modify the boot entries

shutdown /r /o /t 0

#/r to restart

#/o to restart into the Advanced Boot Options (recovery) menu, and /t 0 to do it with no delay

Holding Shift while clicking Restart in the GUI also reboots into recovery mode

Two consecutive failed boots (for example, power cuts during startup) make Windows start in WinRE automatically

bcdedit /set "{current}" safeboot minimal

#run while in Windows: from the next restart, the server boots into Safe Mode (minimal)

bcdedit /deletevalue "{current}" safeboot

#do this afterwards, otherwise it will keep booting into Safe Mode

One way to achieve site-level fault tolerance is Hyper-V Replica, the replication feature within Hyper-V that lets you replicate VMs to other servers or storage

Hyper-V Replica Failover, use the facility to test the failover... at your own risk!
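Below is a sketch of setting up Hyper-V Replica between two hosts and running a test failover. The hosts (HV01 primary, HV02 replica), the VM and the storage path are hypothetical, and the sketch assumes Kerberos over HTTP (port 80). A test failover starts a separate test copy of the VM on the replica server, and Stop-VMFailover removes it again:

```powershell
# Hypothetical lab: primary HV01, replica HV02.nazaudy.local, VM01, Kerberos over HTTP (port 80)

# On the replica server HV02: accept replication and open the HTTP listener
Set-VMReplicationServer -ReplicationEnabled $true -AllowedAuthenticationType Kerberos `
    -ReplicationAllowedFromAnyServer $true -DefaultStorageLocation "D:\Replica"
Enable-NetFirewallRule -DisplayName "Hyper-V Replica HTTP Listener (TCP-In)"

# On the primary server HV01: enable replication for VM01 and send the initial copy
Enable-VMReplication -VMName "VM01" -ReplicaServerName "HV02.nazaudy.local" `
    -ReplicaServerPort 80 -AuthenticationType Kerberos
Start-VMInitialReplication -VMName "VM01"

# On HV02: start a test failover, check the test VM, then clean it up
Start-VMFailover -VMName "VM01" -AsTest
Stop-VMFailover -VMName "VM01"
```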

Global Update Manager is the component responsible for managing cluster database updates. It informs all the other nodes of a change before updating the database (a change could be a host going offline, new storage being added, etc). With PowerShell you can set its mode through the DatabaseReadWriteMode cluster property:

- 0 = All (write) and Local (read); a change must be acknowledged by all hosts before it is committed to the database. This is the default setting for failover clusters (except Hyper-V)

- 1 = Majority (read and write); a change only needs to be acknowledged by a majority of hosts before it is committed to the database. This is the default for a failover cluster running Hyper-V

- 2 = Majority (write) and Local (read); same as above, but database reads are served by the local node, which means the data may be stale

Microsoft recommends not using mode 1 or 2 when you need to ensure that the cluster database is consistent
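Checking and changing the mode is a one-liner each. Below is a sketch against a hypothetical cluster named CLU01:

```powershell
# Hypothetical cluster CLU01
# 0 = All (write) and Local (read), 1 = Majority (read and write), 2 = Majority (write) and Local (read)
(Get-Cluster -Name "CLU01").DatabaseReadWriteMode

# Switch back to the strictest mode when database consistency matters most
(Get-Cluster -Name "CLU01").DatabaseReadWriteMode = 0
```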

Recovering multi-site failover clusters; in a "split-brain" situation, a failure splits the cluster into two partitions. To recover, manually start one node of the partition you want to be authoritative with the /fq (force quorum) switch, for example net start clussvc /fq. Then start the nodes of the other partition with the /pq (prevent quorum) switch, so they join the authoritative partition instead of forming their own cluster
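The FailoverClusters module exposes the same two switches. Below is a sketch in which Node01 sits in the partition chosen as authoritative and Node04 in the other one (both names hypothetical):

```powershell
# Hypothetical nodes: Node01 in the authoritative partition, Node04 in the other partition
# Equivalent of /fq: force quorum so Node01's partition runs the cluster
Start-ClusterNode -Name "Node01" -FixQuorum

# Equivalent of /pq: start Node04 without letting it form its own quorum
Start-ClusterNode -Name "Node04" -PreventQuorum
```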

4. Configure Network Services

DHCP Superscopes; they are useful when you have more than one network segment to serve. A superscope lets you add a second, spill-over scope that provides addresses once the first scope has none left to lease to clients. Superscopes are also useful when you have to serve different VLANs, each with its own network segment. Note that each scope always provides its own gateway on its own subnet, so routing is needed if you want traffic to flow between scopes

To create a superscope, you first create all the scopes that you need, and then follow the wizard to create a superscope, and select all those previously created scopes

Multicast scopes; use them to assign IPs in the multicast range 224.0.0.0 to 239.255.255.255. Clients don't use DHCP to communicate with a multicast scope. They use the Multicast Address Dynamic Client Allocation Protocol (MADCAP) instead. An example of a system that uses multicast is WDS
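Below is a sketch of the superscope steps in PowerShell, followed by a multicast scope. The scope names, ranges and router addresses are hypothetical lab values:

```powershell
# Hypothetical ranges: VLAN10 = 10.10.10.0/24, VLAN20 = 10.10.20.0/24
Add-DhcpServerv4Scope -Name "VLAN10" -StartRange 10.10.10.50 -EndRange 10.10.10.200 -SubnetMask 255.255.255.0
Add-DhcpServerv4Scope -Name "VLAN20" -StartRange 10.10.20.50 -EndRange 10.10.20.200 -SubnetMask 255.255.255.0

# Each scope hands out the gateway of its own subnet
Set-DhcpServerv4OptionValue -ScopeId 10.10.10.0 -Router 10.10.10.1
Set-DhcpServerv4OptionValue -ScopeId 10.10.20.0 -Router 10.10.20.1

# Group the existing scopes into a superscope
Add-DhcpServerv4Superscope -SuperscopeName "Building1" -ScopeId 10.10.10.0, 10.10.20.0

# Multicast (MADCAP) scope in the organisation-local multicast range
Add-DhcpServerv4MulticastScope -Name "WDS Multicast" -StartRange 239.192.0.1 -EndRange 239.192.0.254
```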

DHCPv6; you can use a DHCP server to give IPv6 addresses to clients, but not the default gateway, which clients learn from router advertisements. The IPv6 addresses handed out by DHCP are not routable unless some configuration is done outside the DHCP server

Stateful addressing in Windows clients is managed by the following 2 IPv6 flags, configured locally on each machine or set by a neighbouring IPv6 router:

- M-flag; indicates that clients should obtain an IPv6 address through the DHCPv6 server

- O-flag; indicates that clients should use DHCPv6 to obtain additional configuration options such as the IPv6 of a DNS server
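Below is a sketch of setting both flags locally on a Windows client, plus a DHCPv6 scope for the server side. The interface alias "Ethernet" and the 2001:db8 documentation prefix are assumptions:

```powershell
# On a Windows client: set the M-flag and O-flag locally on interface "Ethernet" (assumed alias)
Set-NetIPInterface -InterfaceAlias "Ethernet" -AddressFamily IPv6 `
    -ManagedAddressConfiguration Enabled -OtherStatefulConfiguration Enabled
Get-NetIPInterface -InterfaceAlias "Ethernet" -AddressFamily IPv6 |
    Format-List ManagedAddressConfiguration, OtherStatefulConfiguration

# On the DHCP server: a DHCPv6 scope for a documentation prefix (hypothetical)
Add-DhcpServerv6Scope -Prefix 2001:db8:0:1:: -Name "IPv6 LAN"
```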

DHCP Failover uses TCP port 647 to communicate between servers

High Availability; DHCP failover lets two DHCP servers share the same subnets and scopes, so that when one DHCP server fails the other one takes over. They can work in either hot standby or load sharing mode. Another way of providing high availability for DHCP is split scopes, where one server serves 80% of a scope and the other one the remaining 20% or so. This method was widely used before Windows Server 2012

DHCP Name Protection prevents the names registered in DNS from being overwritten by devices running non-Windows operating systems that use the same name. It uses a resource record known as DHCID (DHCP Identifier) to track which host originally requested a specific name
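Below is a sketch of both failover modes and of name protection in PowerShell. The partner server name, scopes and shared secret are hypothetical lab values:

```powershell
# Hypothetical lab: local server DHCP01, partner dhcp02.nazaudy.local (TCP 647 open between them)

# Load sharing (50/50) for scope 10.10.10.0
Add-DhcpServerv4Failover -Name "DHCP01-DHCP02" -PartnerServer "dhcp02.nazaudy.local" `
    -ScopeId 10.10.10.0 -LoadBalancePercent 50 -SharedSecret "LabSecret"

# Alternative for another scope: hot standby, with this server active and 5% of addresses reserved for the standby
# Add-DhcpServerv4Failover -Name "DHCP01-DHCP02-HS" -PartnerServer "dhcp02.nazaudy.local" `
#     -ScopeId 10.10.20.0 -ServerRole Active -ReservePercent 5

# Name protection at server level (applies to all scopes)
Set-DhcpServerv4DnsSetting -NameProtection $true
```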

DNSSEC gives clients a way to check the integrity of the results returned when they query a DNS server. It works by using public key cryptography to digitally sign the records stored in DNS zones. Implementing DNSSEC involves the following cryptographic keys:

- Trust anchor

- Key Signing Key (KSK)

- Zone Signing Key (ZSK)

When you sign a DNS zone, a number of special resource records are created, these include:

- Resource Record Signature (RRSIG)

- DNSKEY record

- Next Secure (NSEC/NSEC3) record
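Below is a sketch of signing a zone with the default key settings and then looking at some of the records listed above. It assumes the nazaudy.local zone is hosted on the server where you run it:

```powershell
# Assumes nazaudy.local is hosted on this DNS server
Invoke-DnsServerZoneSign -ZoneName "nazaudy.local" -SignWithDefault -Force

# Inspect the records created by signing
Get-DnsServerResourceRecord -ZoneName "nazaudy.local" -RRType DnsKey
Get-DnsServerResourceRecord -ZoneName "nazaudy.local" -RRType RRSig | Select-Object -First 5
```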

On DNS servers that only forward queries, you need to import the zone's keyset file as a trust point. Go to Trust Points >> Import DNSKEY, navigate to the other DNS server at \\ad01.nazaudy.local\c$\system32\dns\ and import the keyset-nazaudy.local file

Resolve-DnsName www.nazaudy.com -server SRV01 -Dnssecok

#-DnssecOk sets the "DNSSEC OK" flag in the query, so if the zone is signed, the RRSIG records come back with the answer

Do as above but with the www.irs.gov domain to test

Clear-DnsClientCache

To make clients require DNSSEC for a domain, edit the domain GPO under Computer Configuration >> Policies >> Windows Settings >> Name Resolution Policy. Enter the domain under "Suffix" and enable DNSSEC for that rule
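For a quick test on a single machine, a local NRPT rule does the same job without a GPO. The namespace below matches the lab domain:

```powershell
# Local NRPT rule (not a GPO): require DNSSEC validation for names under .nazaudy.local
Add-DnsClientNrptRule -Namespace ".nazaudy.local" -DnsSecEnable -DnsSecValidationRequired
Get-DnsClientNrptRule

# Effective NRPT policy on this client, including rules delivered by GPO
Get-DnsClientNrptPolicy
```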

DNS Socket Pool; DNS queries are predictable (the source port is UDP 53), so an attacker can potentially intercept a query and redirect the client to a malicious website. DNS socket pool minimises the chances of successful cache tampering and DNS spoofing attacks. It randomizes the source port used to issue DNS queries to remote DNS servers, which makes queries less predictable. By default, the DNS socket pool uses 2,500 ports for both IPv4 and IPv6. You can adjust the number of ports as shown below, but note that more ports also consume more memory

dnscmd /info /socketpoolsize

#tells you the number of ports on the socket

dnscmd /config /socketpoolsize 6000

#the above sets 6,000 ports for the DNS socket pool (10,000 is the maximum); restart the DNS service for the change to take effect

DNS Cache Locking ;once you configure it you can control if and when records stored in the DNS server cache can be overwritten. Since 2008 R2 the DNS cache can be locked, preventing an attacker from injecting malicious data on it

dnscmd /info /cachelockingpercent

#it should show you 100% which is the default value

net stop dns

net start dns

#restart the service after changing these settings

If your organisation has DNS servers facing the public, consider disabling recursion to prevent denial-of-service attacks. However, that also disables DNS forwarding. Note that you cannot open the DNS log file while the service is running; you need to stop the service first
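The cache locking and recursion settings above also have DnsServer module equivalents. Below is a minimal sketch; keep recursion enabled on internal DNS servers that clients depend on:

```powershell
# Cache locking percentage (the same value as dnscmd /info /cachelockingpercent)
Get-DnsServerCache
Set-DnsServerCache -LockingPercent 100

# Public-facing server only: disable recursion (this also stops forwarding)
Set-DnsServerRecursion -Enable $false

Restart-Service -Name DNS
```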

When netmask ordering is enabled and a name has several A records, the DNS server returns first the record on the client's subnet, if one exists

The GlobalNames zone provides single-label name resolution, translating single names to IP addresses: for example, the name "intranet" rather than the FQDN intranet.nazaudy.local. The GlobalNames zone replaces WINS servers, and its drawback is that you need to create the CNAME records manually. GlobalNames zones are used mostly for legacy apps/clients, quite possibly running Linux. The steps to enable it are:

- Create a zone called "GlobalNames"

- Enable GlobalNames support with dnscmd on each DNS server (see the dnscmd command below)

- Populate it with CNAME records

dnscmd server /config /enableglobalnamessupport 1

GlobalNames Zone is a way to get rid of WINS once and for all
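The same three steps can be run in PowerShell. Below is a minimal sketch, using "intranet" as the example name:

```powershell
# 1. AD-integrated zone called GlobalNames, replicated to every DNS server in the forest
Add-DnsServerPrimaryZone -Name "GlobalNames" -ReplicationScope Forest

# 2. Enable GlobalNames support (PowerShell equivalent of dnscmd /enableglobalnamessupport 1); run on each DNS server
Set-DnsServerGlobalNameZone -Enable $true

# 3. Single-label name "intranet" pointing at the FQDN
Add-DnsServerResourceRecordCName -ZoneName "GlobalNames" -Name "intranet" -HostNameAlias "intranet.nazaudy.local"
```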

IPAM; it lets you centralise, view, manage and configure the IP addresses of your organisation. It works by discovering your infrastructure servers and importing all the available IP address data from them. With IPAM you can manage DHCP scopes, create reservations and DNS host records, find free static IP addresses, etc. IPAM does not support managing or configuring non-Microsoft network elements. Also note that IPAM cannot be installed on a Domain Controller, DNS or DHCP server; if you try, you get the "IPAM Provisioning failed" error message. To manage IPAM from another box, install the IPAM client feature from Remote Server Administration Tools in the Add Roles and Features wizard. Then, in Server Manager, click Add Servers to add the IPAM server to the machine running the client. The account running IPAM must be a member of:

- Remote Management Users

- WinRMRemoteWMIUsers__; allows them to run PowerShell remotely. Add users to this group if you want them to access IPAM remotely by using Server Manager

In addition, on the server running IPAM, add members to the following groups if you wish to delegate access:

- IPAM Administrators; view all IPAM data and manage all IPAM features

- IPAM ASM Administrators; manage IP address blocks, ranges and addresses

- IPAM IP Audit Administrators; view IP address tracking data

- IPAM MSM Administrators; Multi-Server Management administrators can manage DNS and DHCP servers

- IPAM Users; can view IPAM information but cannot manage anything or view IP address tracking data

It is better to provision IPAM by GPO, because it saves you from editing the registry, creating firewall rules, etc. However, even if you selected GPO-based provisioning, no GPOs are created until you run this PowerShell command:

Invoke-IpamGpoProvisioning -Domain nazaudy.local -GpoPrefixName IPAM -IpamServerFqdn srv01.nazaudy.local -DelegatedGpoUser nazaudy\admin

That creates the three GPOs "IPAM_DC_NPS", "IPAM_DHCP" and "IPAM_DNS". However, none of these GPOs has any group under Security Filtering. So the last thing you need to do is create a group called "IPAM_Computers", add the DC, DHCP and DNS servers to it, and add the group to the security filtering of those GPOs. Remember that the servers need to be rebooted for the new group membership to take effect. After the reboot, run this on the affected servers to check that the right GPOs appear under Applied Group Policy Objects

gpresult /r /scope computer
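Below is a sketch of the group and security filtering step, run on a DC with the ActiveDirectory and GroupPolicy modules. The server names DC01, DHCP01 and DNS01 are hypothetical:

```powershell
# Group holding the servers IPAM will manage (hypothetical server names)
New-ADGroup -Name "IPAM_Computers" -GroupScope Global -GroupCategory Security
Add-ADGroupMember -Identity "IPAM_Computers" -Members "DC01$", "DHCP01$", "DNS01$"

# Let the group read and apply each IPAM GPO created by Invoke-IpamGpoProvisioning
"IPAM_DC_NPS", "IPAM_DHCP", "IPAM_DNS" | ForEach-Object {
    Set-GPPermission -Name $_ -TargetName "IPAM_Computers" -TargetType Group -PermissionLevel GpoApply
}
```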

To get a green status for a server in the IPAM console, the server needs to be set as "Managed"

When adding IP address subnets, you can also record the VLAN they belong to

5. Configure the Active Directory Infrastructure

Multi-Domain Forests; a single organisation may have multiple domains within the forest for historical or political reasons as well as for security considerations or delegation to branches where domains act as authorisation boundaries

Multi-Forest environment; a single company may also have several forests (for example, after merging with another company) that need to trust one another in order to share resources. These multi-forest trusts must be established manually

adprep; we used to run this command before introducing a DC running a new OS. With Windows Server 2012 you don't need to, because the promotion runs it for you, as long as you promote the 2012 DC with an account that is a member of the Schema Admins, Domain Admins and Enterprise Admins groups

UPN Suffixes; they let users log on to their domains with usernames that look like email addresses. You configure them in the properties of Active Directory Domains and Trusts
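The same setting is exposed by the ActiveDirectory module. Below is a minimal sketch in which the nazaudy.com suffix and the user jdoe are hypothetical examples:

```powershell
# Add an alternative UPN suffix at forest level (hypothetical suffix nazaudy.com)
Set-ADForest -Identity "nazaudy.local" -UPNSuffixes @{Add = "nazaudy.com"}

# Give a (hypothetical) user a UPN with the new suffix
Set-ADUser -Identity "jdoe" -UserPrincipalName "jdoe@nazaudy.com"
```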

The trusting domain contains the resources to which you want to allow access, while the trusted domain (where the users are trusted) hosts the security principals to which you want to grant access. Trust transitivity allows a trust to extend beyond the original trusting domain

- Two-way trust (bidirectional trust); both sides of the trust act as trusting and trusted domains (or forests)

- One-way incoming trust; the local domain is the trusted domain (accounts) and the remote domain is the trusting domain (resources). It's incoming because the trust comes in from the remote domain, so users here can access resources there

- One-way outgoing trust; the local domain is the trusting domain (resources) and the remote domain is the trusted domain (accounts). It's outgoing because the trust goes out to the remote domain, so users there can access resources here

External trusts let a domain in one forest trust a specific domain in another forest, without any other domain in either forest being included in the relationship. To create an external trust, you must be a member of the Domain Admins group of the domain where the trust is being configured (or of Enterprise Admins)

Realm trusts are trusts with a non-Windows Kerberos realm, such as one running in a Linux environment

Forest trusts let a whole forest trust another forest, so that all the domains in one forest trust all the domains in the other. The trust can be one-way or two-way. When you configure a forest trust, you can use either of the following authentication scopes:

- Forest-wide authentication; default setting, users in the trusted forest are authenticated for all resources in the local forest, though permissions still need to be given in the local forest!

- Selective authentication; suitable when each forest belongs to a separate company, because with this setting users are not authenticated automatically. You need to grant users from the trusted forest the "Allowed to authenticate" permission on the specific servers they may use

Shortcut Trust; this is when you want to speed up authentication between two domains in the same forest that sit in separate branches, for example between branch2.domain.company.london.com and branch3.domain.company3.com. It saves authentication requests from going all the way through the parent domains. Shortcut trusts are only used inside a single forest, where the domains already trust each other transitively through their parents
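The ActiveDirectory module has no cmdlet to create trusts (only Get-ADTrust), but the .NET System.DirectoryServices.ActiveDirectory classes can create them. Below is a sketch of a two-way forest trust set to selective authentication. The remote forest fabrikam.com and its admin account are hypothetical, and the password is read from a credential prompt:

```powershell
# Hypothetical remote forest fabrikam.com and its admin account
$cred   = Get-Credential "fabrikam\administrator"
$ctx    = New-Object System.DirectoryServices.ActiveDirectory.DirectoryContext(
              "Forest", "fabrikam.com", $cred.UserName, $cred.GetNetworkCredential().Password)
$remote = [System.DirectoryServices.ActiveDirectory.Forest]::GetForest($ctx)
$local  = [System.DirectoryServices.ActiveDirectory.Forest]::GetCurrentForest()

# Create both sides of a two-way forest trust, then switch it to selective authentication
$local.CreateTrustRelationship($remote, "Bidirectional")
$local.SetSelectiveAuthenticationStatus("fabrikam.com", $true)

# Verify the trust and list the trusts known to this domain
$local.VerifyTrustRelationship($remote, "Bidirectional")
Get-ADTrust -Filter *
```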

Trusts use Kerberos V5 authentication by default and fall back to NTLM only if Kerberos V5 is not supported. Configuring trusts requires the following ports to be available:

- Port 389 UDP and TCP, used by LDAP

- Port 445 TCP, used by Microsoft SMB

- Port 88 UDP and TCP, used by Kerberos

- Port 135 TCP, used for trust endpoint resolution

SID (Security Identifier) filtering is a security measure that stops users in a trusted forest or domain from elevating their privileges. It works by discarding any SID in a user's token that doesn't carry the domain SID of the trusted domain (for example, SIDs carried in SID history). It is enabled by default on Windows Server 2012 and R2. Yes, I know it's confusing, but to turn off SID filtering you have to turn on (enable) SID history

Disabling filtering is equivalent to enabling SIDHistory management

Enabling filtering is equivalent to disabling SIDHistory management

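netdom trust TRUSTING_DOMAIN /domain:TRUSTED_DOMAIN /enablesidhistory:yes

#replace the placeholders with your domain names; yes allows SID history across the trust (filtering relaxed), no is the default (filtering enforced)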

Name Suffix Routing; on a forest trust, it controls which name suffixes of the other forest (for example, its UPN suffixes) authentication requests are routed to

Sites; when properly configured, sites let AD clients locate the closest instance of a particular resource. This saves them from crossing a slow link to another site when what they need is present locally. An AD site represents a location where hosts share a fast local network connection, and it can contain more than one IP subnet. It is recommended to rename Default-First-Site-Name to something more appropriate for your company. Remember that you can associate multiple subnets with a single site, but not multiple sites with a single subnet. In other words, a subnet belongs to only one site

Site Links; they specify how replication occurs between one site and another. The default cost of a site link is 100, and the lower the cost, the more preferred the link is for replication. Site Link Bridges allow you to create transitive links between site links, and you only need to create them if you have cleared the "Bridge all site links" checkbox for the transport protocol in use. The site links in a bridge must have a site in common for the bridge to work: for example, bridging link A >> B with link B >> C creates a path between sites A and C that goes through site B
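Because bridged site links behave transitively, the cost of a multi-hop path is the sum of its link costs, and the cheapest total wins. This is a minimal sketch of that idea using Dijkstra's shortest-path algorithm. The site names and costs are hypothetical, and the real KCC weighs more than cost alone (schedules, availability).

```python
import heapq


def cheapest_route(links, src, dst):
    """Dijkstra over site links; returns (total_cost, [sites]) or None.

    links: iterable of (site_a, site_b, cost). With all site links bridged,
    link costs add up along a path, which is what summing models here.
    """
    graph = {}
    for a, b, cost in links:
        graph.setdefault(a, []).append((b, cost))
        graph.setdefault(b, []).append((a, cost))
    queue = [(0, src, [src])]
    seen = set()
    while queue:
        cost, site, path = heapq.heappop(queue)
        if site == dst:
            return cost, path
        if site in seen:
            continue
        seen.add(site)
        for nxt, link_cost in graph.get(site, []):
            if nxt not in seen:
                heapq.heappush(queue, (cost + link_cost, nxt, path + [nxt]))
    return None  # no path: the sites are not connected by any link


# Hypothetical topology: two default-cost links and one expensive direct link.
LINKS = [("London", "Madrid", 100), ("Madrid", "Paris", 100), ("London", "Paris", 300)]
```

Here London to Paris goes through Madrid (total cost 200) rather than over the direct link (cost 300).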

SRV records, also known as locator records, allow clients to locate resources using DNS queries. Each domain controller registers its own _kerberos and _ldap records. Each DC registers its SRV records every 60 minutes, and you can trigger a manual SRV record registration by restarting the Netlogon service
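To make the locator records concrete, this sketch builds the SRV query names a client can use to find a DC. It tries the site-specific names first, which is how sites steer clients to a local DC. The names follow the standard DC locator format; the site name "London" is hypothetical.

```python
def dc_locator_names(domain, site=None):
    """SRV query names used to find a DC, site-specific names first."""
    names = []
    if site:
        names += [f"_ldap._tcp.{site}._sites.dc._msdcs.{domain}",
                  f"_kerberos._tcp.{site}._sites.dc._msdcs.{domain}"]
    names += [f"_ldap._tcp.dc._msdcs.{domain}",
              f"_kerberos._tcp.dc._msdcs.{domain}"]
    return names
```

Any of these names can be checked by hand, for example with `nslookup -type=SRV _ldap._tcp.dc._msdcs.nazaudy.local`. If a DC is missing from the answer after its SRV records should have been registered, restarting Netlogon on that DC is the first thing to try.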

RODCs; when an account whose password is not cached on the RODC (for example, a member of the "Denied RODC Password Replication Group") attempts to log on through an RODC, the RODC passes the authentication request to a writable DC, normally located in a different, more secure site than the RODC. Use the "Password Replication Policy" tab on the RODC's computer object in AD to configure which accounts can have their passwords replicated to it; on this tab you can also check which accounts have replicated to a specific RODC by clicking the "Advanced" button. If an RODC ever gets compromised, just delete its object from AD and you'll be prompted to reset all passwords stored on the missing/lost RODC
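The Password Replication Policy decision can be sketched as follows: Deny always wins over Allow, and an account that matches neither list is never cached. The two group names are the built-in ones. The account names and the simplified logon flow are assumptions for illustration; a real RODC requests the password from the writable DC after the forwarded logon succeeds.

```python
ALLOWED = "Allowed RODC Password Replication Group"
DENIED = "Denied RODC Password Replication Group"


def may_cache(principals, allow=frozenset({ALLOWED}), deny=frozenset({DENIED})):
    """Password Replication Policy: Deny wins over Allow; no match means no caching."""
    principals = set(principals)
    if principals & deny:
        return False
    return bool(principals & allow)


def rodc_logon(account, principals, cache):
    """Simplified view of where an RODC sends a logon request."""
    if account in cache:
        return "RODC authenticates locally"
    if may_cache(principals):
        cache.add(account)  # password replicated after the writable DC succeeds
        return "forwarded to writable DC; password now cached"
    return "forwarded to writable DC; password not cached"
```

An account in both groups is never cached. That is why the Denied group is the safe place for admin accounts on a branch-office RODC.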

dfsrdiag; in Windows Server 2012 you can force DFS Replication by using the "dfsrdiag syncnow" command

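repadmin; a tool that lets you manage AD replication. Use "repadmin /syncall" to synchronise a DC's directory partitions with all of its replication partners (add /A for all partitions and /e for partners in other sites); SYSVOL is replicated separately, by FRS or DFSR

repadmin /replsummary #summarises the replication status of all DCs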

repadmin /showrepl #display info about inbound replication traffic

#To view which users have had their passwords replicated to a RODC, do this:

repadmin /prp view dc01 reveal

SYSVOL; Windows 2000/2003 Server used FRS (File Replication Service) to replicate the SYSVOL folder, while Windows Server 2008 and newer can use the more efficient DFS Replication (DFSR). Use the dfsrmig.exe utility to migrate SYSVOL replication from FRS to DFSR; note that the domain functional level must be Windows Server 2008 or higher. To migrate from FRS to DFSR do as follows:

dfsrmig /SetGlobalState 1

#then 2 (Redirected) and 3 (Eliminated), each once every DC has reached the previous state; 0 is Start and 1 is Prepared

dfsrmig /GetMigrationState

dfsdiag /testdcs

#checks the DFS configuration of the domain controllers
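The dfsrmig global states form a small state machine: 0 Start, 1 Prepared, 2 Redirected, 3 Eliminated. This sketch checks a proposed `/SetGlobalState` move under assumed rules. It assumes you advance one state at a time, waiting for `/GetMigrationState` to report that every DC has caught up. It also assumes you can roll back from Prepared or Redirected, but not once FRS has been eliminated.

```python
STATES = {0: "Start", 1: "Prepared", 2: "Redirected", 3: "Eliminated"}


def can_set_global_state(current, target):
    """Check a proposed 'dfsrmig /SetGlobalState <target>' move (assumed rules)."""
    if current not in STATES or target not in STATES:
        return False
    if current == 3:  # Eliminated: FRS is gone, no rollback
        return False
    if target > current:
        return target == current + 1  # advance one state at a time
    return target < current  # roll back from Prepared or Redirected
```

Stepping through one state at a time keeps a rollback available until the very last step.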

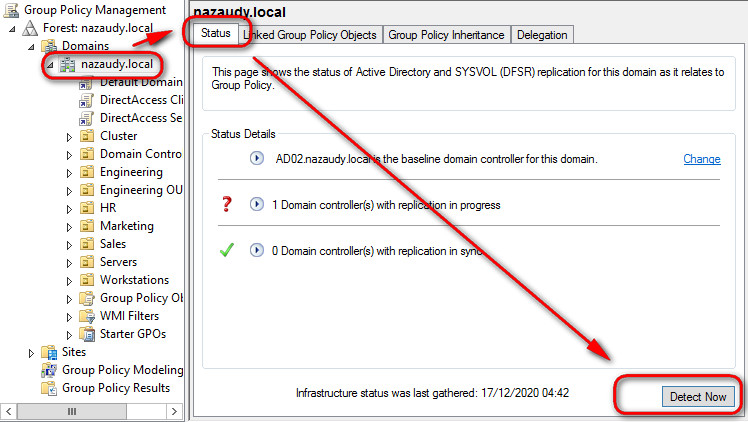

When you open Group Policy Management, click the domain name "nazaudy.local" and, on the Status tab, use "Change" to modify the baseline domain controller; you can also click "Detect Now" to see any Group Policy replication issues

6. Configure Access and Information Protection Solutions

Active Directory Federation Services (AD FS); it allows you to configure federated relationships between your organisation and a partner organisation. AD FS is used when configuring single sign-on between an on-premises AD and an Azure AD instance, allowing a single account to be used with Exchange Online, SharePoint Online and Windows Intune. Why Federated Services and not trust relationships? Well, trust relationships are very broad (domain/forest A trusts domain/forest B) and should only be used between sister companies or companies that have merged. AD FS is useful for in-house apps that are claims-aware

- One good thing to do is to create conditional forwarders between the 2 domains that are going to interact with one another, so that each domain can quickly resolve the other's names

- Create a GPO on each domain and set Computer Configuration >> Windows Settings >> Security Settings >> Local Policies >> Security Options >> Network access: Let Everyone permissions apply to anonymous users = Enabled; this means that anonymous users also receive the permissions granted to Everyone

- For each domain, create a "Certs" folder and share it with Read permission for Everyone; this is used to import/export certs between the 2 domains

- For each domain, open the CA console >> Properties of the CA >> Extensions tab >> and add an additional CRL distribution point that reads as follows:

file://

- Check all the options for this newly added CRL, and then restart the CA Service

- Run "copy C:\Windows\System32\CertSrv\CertEnroll\*.crt C:\Certs" to copy all certificates to the shared folder so the other domain can access them

- Create a new GPO, go to Security Settings >> Public Key Policies >> Trusted Root Certification Authorities, and import all the certificates from the other domain's Certs shared folder

- On the server that is going to run AD FS (it should not be a DC), run mmc to open the Certificates console for the Local Computer >> Personal >> Request New Certificate >> Active Directory Enrolment Policy >> Certificate Properties >> DNS, and enter the FQDN of the AD FS service

- Install the AD FS role, and make sure you click the link at the end of the installation to start the AD FS Configuration Wizard; during the wizard you'll select the server certificate created in the previous step

- Edit the Global Authentication Policy in the AD FS Console

On the perimeter network you need to deploy a Web Application Proxy server that communicates with the secured, AD-joined Federation Server

Claims-based authentication; AD FS builds tokens that contain claim data by using the following:

- Claim; the description of an object based on the object's attributes

- Claim rules; these specify how the Federation Server interprets claims

- Attribute store; this holds the values used by claims; most of the time AD acts as the attribute store
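The three pieces fit together as a pipeline: the Federation Server looks the user up in the attribute store, runs each claim rule against those attributes, and puts the resulting claims into the token. This is a conceptual sketch only. The claim-type URIs are the standard UPN and role URIs, but the attribute store, user and rules are hypothetical stand-ins for AD and the AD FS rule language.

```python
UPN = "http://schemas.xmlsoap.org/ws/2005/05/identity/claims/upn"
ROLE = "http://schemas.microsoft.com/ws/2008/06/identity/claims/role"

# Hypothetical attribute store standing in for AD.
ATTRIBUTE_STORE = {
    "anna@nazaudy.local": {"userPrincipalName": "anna@nazaudy.local",
                           "memberOf": ["Sales", "VPN Users"]},
}


def pass_upn(attrs):
    """Rule: pass the UPN through as a claim."""
    return [(UPN, attrs["userPrincipalName"])]


def sales_role(attrs):
    """Rule: members of Sales get a role claim the partner app understands."""
    return [(ROLE, "SalesReader")] if "Sales" in attrs["memberOf"] else []


def issue_claims(user, rules, store=ATTRIBUTE_STORE):
    """Run every claim rule against the user's attributes; collect the claims."""
    attrs = store[user]
    claims = []
    for rule in rules:
        claims.extend(rule(attrs))
    return claims
```

The relying party never sees the `memberOf` attribute itself, only the claims that the rules chose to emit.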

Relying-party server; this is a server in the AD forest that hosts the resources a user in a partner organisation wants to access. Relying-party servers accept and validate the claims stored in the token issued by the Federation Server. The claims provider is the Federation Server in the forest that hosts the user account that wants access, and it issues claims to users in the form of digitally signed and encrypted tokens.

A relying-party trust means that a specific claims-provider server trusts a specific relying-party server, and it is configured on the Federation Server that functions as the claims provider

A claims-provider trust means that a relying party trusts a specific claims provider, and it is configured on the Federation Server that functions as the relying party

Authentication Policies allow you to control how AD FS performs authentication. The methods supported by AD FS are the following:

- Forms Authentication; credentials are entered on a webpage, ideal for Intranet and Extranet clients

- Windows Authentication; only available to intranet clients. Credentials are passed directly to AD FS if using IE; otherwise users must provide their credentials in a pop-up dialog box

- Certificate Authentication; this requires the user to already be provided with a certificate, either by installing it on a device or by providing it on a smart card
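Which of these methods a client is offered depends on where it connects from and what is enabled in the Global Authentication Policy. This is a small sketch of that lookup. The location sets reflect the list above; allowing certificate authentication from both locations is an assumption based on AD FS in Windows Server 2012 R2.

```python
# Where AD FS can offer each primary authentication method.
METHODS = {
    "Forms": {"intranet", "extranet"},
    "Windows": {"intranet"},
    "Certificate": {"intranet", "extranet"},
}


def available_methods(location, enabled):
    """Methods a client at 'location' can be offered, given those enabled in policy."""
    return sorted(m for m in enabled if location in METHODS.get(m, set()))
```

An extranet user therefore never gets Windows Authentication, even if it is the only method ticked for the intranet.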

Workplace Join is a Windows Server 2012 R2 feature that allows non-domain-joined computers or devices to access organisational resources in a secure manner. Workplace Join supports single sign-on, Windows 8.1 and iOS devices, and only applications that are claims-aware and use AD FS can use Workplace Join device registration information. You enable Workplace Join by running the following:

1) Initialize-ADDeviceRegistration -ServiceAccountName nazaudy\ADFS_NAZAUDY$

#use the $ sign at the end if you're using a gMSA

2) Enable-AdfsDeviceRegistration

#that 2nd step requires no argument

#After running the above PowerShell, enable device authentication in the Global Authentication Policy

Users authenticate using their UPNs, so to support Workplace Join you'll need an external DNS record for enterpriseregistration.<UPN suffix> that points to either the Web Application Proxy server or the AD FS server itself

Multi-factor authentication allows you to require two separate methods of authentication: logon credentials plus a certificate or an authentication application running on a mobile device. You can configure multi-factor authentication either globally or per relying-party trust. One way of providing multi-factor authentication is to use the Microsoft Windows Azure Multi-Factor Authentication service

Active Directory Certificate Services (AD CS); nowadays certificates are as much a part of a modern network as DHCP and DNS. Installing the AD CS role on a server makes it a Certification Authority (CA), and you can choose between the following deployment types:

Enterprise Root CA; root CAs sign their own certificates, and an enterprise root CA is a member of AD that can issue certificates based on templates that you can configure. All members of AD will trust this CA, and it allows you to configure auto-enrolment, reducing the administrative overhead of managing certificates. Consider the following when deploying an Enterprise Root CA:

- Because of AD dependencies, they must remain online

- In organisations with more than 300 users, it is prudent to deploy an offline stand-alone root CA and then use an enterprise subordinate CA for certificate deployment and management

- Enterprise root CAs can provide signing certificates to both enterprise subordinate and stand-alone subordinate CAs

- Though it is possible, avoid deploying more than one enterprise root CA in the forest

Enterprise Subordinate CA; they allow you to implement certificate enrolment and can be used while the root CA is offline to increase security. You can configure multiple subordinate CAs in a forest, each issuing certificates based on different templates. Obviously, an enterprise subordinate CA must be a member of AD and, because of its AD dependencies, must remain online. Initially, the CA can only issue a limited number of templates; to issue more, you need to enable other templates using the Certification Authority console. You can create new certificate templates by duplicating existing ones

Stand-alone Root CA; not bound to AD, it can issue signing certificates to stand-alone subordinate CAs as well as enterprise subordinate CAs. In large environments, stand-alone CAs can be configured to function as offline root CAs. When configuring an offline root CA, you need to configure CRL (Certificate Revocation List) and AIA (Authority Information Access) distribution points, and publish a copy of the CA certificate, in locations that remain online. A stand-alone root CA can only issue certificates based on a limited set of templates, and it does not directly support certificate enrolment or renewal. Certificate requests and issuance must be handled manually

To verify the successful installation of CA from a client computer, run this on the client:

certutil -ping -config "fqdn.domain.local\computerCAname-ca01-ca"

Stand-alone subordinate CA; not bound to AD, they can only issue certificates from a limited number of non-editable certificate templates
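The offline-root design works because clients validate a certificate by walking its issuers up to a trusted, self-signed root. They never contact the root CA itself; they only need its published certificate and CRL. This is a minimal sketch of that walk over a hypothetical two-tier hierarchy (the CA names are made up). Real chain building also checks signatures, validity dates and revocation at each step.

```python
# Hypothetical two-tier hierarchy: subject -> issuer.
ISSUER_OF = {
    "anna@nazaudy.local": "nazaudy-SubCA01",  # end-entity certificate
    "nazaudy-SubCA01": "nazaudy-RootCA",      # enterprise subordinate CA
    "nazaudy-RootCA": "nazaudy-RootCA",       # offline stand-alone root (self-signed)
}


def build_chain(subject, issuer_of=ISSUER_OF, max_depth=10):
    """Follow issuers until a self-signed root; guard against loops."""
    chain = [subject]
    while issuer_of[chain[-1]] != chain[-1]:
        chain.append(issuer_of[chain[-1]])
        if len(chain) > max_depth:
            raise ValueError("chain too long or looping")
    return chain
```

A missing issuer raises `KeyError`. That is the sketch's equivalent of a client failing to build the chain because the AIA location holding the CA certificate is unreachable.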

Certificate Revocation Lists (CRLs) are lists of certificates that have been revoked by the administrator of an issuing CA; a CRL Distribution Point (CDP) is the location that hosts this list, and clients check it to determine whether a certificate they hold has been revoked. When you publish a CRL, the CA writes the list to the CDP location

Online Responders provide a streamlined way for clients to check certificate revocation: instead of downloading the entire CRL, the client simply queries the Online Responder with a certificate identifier to determine whether that certificate is valid
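The difference between the two approaches is mostly about how much data the client handles. This sketch counts entries transferred per check. With a CRL, the client downloads every entry and searches locally. With an Online Responder, it asks about one serial number. The serial numbers are made up, and the "1" for OCSP simply stands for a single-certificate answer.

```python
def crl_check(serial, crl_entries):
    """Client downloads every CRL entry, then searches locally."""
    status = "revoked" if serial in crl_entries else "good"
    return status, len(crl_entries)  # (status, entries transferred)


def ocsp_check(serial, responder_db):
    """Client sends one serial; the responder answers for that serial only."""
    status = "revoked" if serial in responder_db else "good"
    return status, 1
```

Both give the same answer, but the CRL client pulled the whole list to get it. Behind the scenes, the Online Responder itself still reads the CA's CRLs to build its answers.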

CA Backup and Recovery; Using the CA console you can choose to backup the following:

- Private Key and CA Certificate, this will allow you to restore the CA to a new computer should the original host fail

- Certificate Database & Certificate Database Log

You can also perform a backup using the certutil utility (for example, certutil -backup C:\CABackup)

Revoke Certificates; after you revoke a certificate you should publish a new CRL or delta CRL, otherwise the certificate will not be recognised as invalid by all clients, because clients cache the CRL and only check for an updated CRL or delta CRL based on the publication period of those lists
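The caching effect is easy to simulate. In this simplified model, a revocation only reaches clients once a CRL is published. A client that already cached the previous CRL keeps trusting it until that CRL's Next Update time. The CA, client, serial number and 7-day period are all hypothetical, and delta CRLs are left out.

```python
from datetime import datetime, timedelta


class CertificateAuthority:
    """Hypothetical CA: revocations only reach clients once a CRL is published."""

    def __init__(self, publication_period):
        self.period = publication_period
        self.revoked = set()  # CA database
        self.published = (frozenset(), datetime.min)  # (serials, next update)

    def revoke(self, serial):
        self.revoked.add(serial)

    def publish_crl(self, now):
        self.published = (frozenset(self.revoked), now + self.period)


class Client:
    """Caches the CRL and only downloads it again after its Next Update time."""

    def __init__(self, ca):
        self.ca = ca
        self.cache = None

    def is_revoked(self, serial, now):
        if self.cache is None or now >= self.cache[1]:
            self.cache = self.ca.published
        return serial in self.cache[0]
```

Even when the new CRL is published straight after the revocation, a client holding the old CRL only sees it when its cached copy expires. A shorter publication period (or delta CRLs) narrows that window.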

Certificate enrolment; to enrol manually, use either the Certificates console or web-based enrolment if the CA is configured for it. To enrol automatically, enable auto-enrolment as follows:

- Enable the "Certificate Services Client - Auto-Enrollment" policy in a GPO that applies to the security principals you want to enrol automatically

- On the Security tab of the certificate template, grant the "Autoenroll" permission (along with Read and Enroll) to the user/group that will use that certificate

When configured, automatic renewal occurs either at 80% of the certificate's lifetime or at the renewal period configured in the template. You can also manually trigger re-enrolment from the Certificate Templates console by selecting the "Reenroll All Certificate Holders" action for certificates deployed through auto-enrolment from an enterprise CA

Key archival and recovery; this allows you to recover private keys and is not enabled by default. To enable it, you need to enrol at least one user with a certificate issued from the Key Recovery Agent (KRA) certificate template, which is stored in AD. The user that holds the private key associated with the KRA certificate can then recover certificate private keys using certutil with the -getkey option and the certificate serial number

Online Certificate Status Protocol (OCSP, implemented in Windows as the Online Responder) checks the revocation status of specific certificates only: rather than going through the thousands of certificates a CRL might contain, OCSP only queries the certificate you submit. Make sure you open "Manage Templates" >> OCSP Response Signing and add the computer account that hosts the Online Responder (the CA computer in this lab) with the "Read" and "Enrol" permissions (there is no need to grant "Autoenroll"; for a domain-joined Online Responder, "Enrol" is enough)

Role Separation; to introduce security role separation into your CA, open its properties >> Security tab and create groups according to the permissions that you can delegate there, as follows:

- Read

- Issue and Manage Certificates

- Manage CA; this does not include the right to issue certificates

- Request Certificates

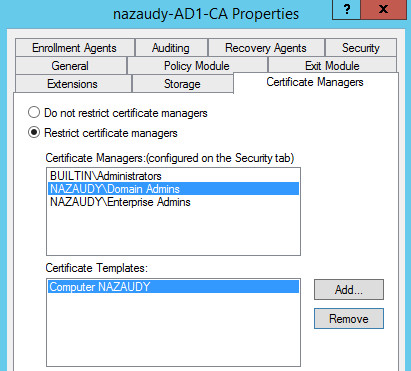

It is recommended to create separate groups for each of these roles and remove the Administrators, Domain Admins and Enterprise Admins groups that have those roles assigned by default. That way you narrow the security scope and limit the danger of involving many users (even if they are admins) in the CA process: if an admin account gets compromised, the CA is also compromised. Any account that has the "Issue and Manage Certificates" permission appears on the "Certificate Managers" tab, where we can restrict it further; that way we can designate specific groups to issue specific templates (for example, Domain Admins issuing only "Computer NAZAUDY" template certificates)
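When role separation is enforced (with `certutil -setreg CA\RoleSeparationEnabled 1` followed by a restart of the CA service), a user who holds more than one CA role is blocked from all CA operations. This sketch models only the two Security-tab roles from the list above; the other Common Criteria roles (such as auditor and backup operator) are left out.

```python
# CA roles and the Security-tab permission that grants each one.
ROLES = {
    "CA Administrator": "Manage CA",
    "Certificate Manager": "Issue and Manage Certificates",
}


def can_operate(user_permissions, role_separation_enabled):
    """With role separation enforced, anyone holding more than one CA role is blocked."""
    held = [role for role, perm in ROLES.items() if perm in user_permissions]
    if role_separation_enabled and len(held) > 1:
        return False
    return bool(held)
```

This is why the groups for each role should not overlap: with enforcement on, an admin who is in both groups can do nothing at all.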

Active Directory Rights Management Services (AD RMS); it allows you to control who is able to access and distribute documents without having to apply file and folder permissions. You can use AD RMS, for example, to stop users from emailing documents as attachments to unauthorised third parties, from copying or changing them, or from accessing files from insecure locations (like copying encrypted files to USBs). The AD RMS root cluster (the 'cluster' name here has nothing to do with failover or load balancing) manages all the licensing and all the AD RMS certificate traffic, and there can only be one AD RMS root cluster per forest. The certificates used in AD RMS are not the PKI certificates we are used to; they are created with XrML (eXtensible rights Markup Language). When deploying AD RMS, consider the following:

- AD RMS requires a domain service account to run (no need to be Domain Admin!!!); you should use a gMSA (supported in Windows Server 2012) so that the password is managed by AD

- Select between 2 cryptographic modes: mode 1 is weaker (1024-bit RSA keys and SHA-1 hashes) while mode 2 is stronger and more secure (2048-bit RSA keys and SHA-256 hashes)

- Choose a cluster key storage location

- Input a cluster key password (if you lose it... forget about recovering anything)

- Specify the cluster address in FQDN format; you should have already installed a web server certificate with the FQDN of that host server

- Choose a licensor certificate name that represents the certificate functionality

- Choose whether to register the Service Connection Point (SCP) in AD; the SCP allows clients to locate the AD RMS cluster. The AD RMS SCP is registered during AD RMS deployment if you install AD RMS with an account that is a member of the Enterprise Admins group. At any time, you can change the SCP by editing the cluster properties in the AD RMS console

RMS Templates; you use the AD RMS console to create rights policy templates, granting rights on a per-user or per-group basis. AD RMS requires that each security principal has an associated email address. A rights policy template must be distributed to a client (either manually or by GPO) before a user can apply the template to protect content. If a user is a member of multiple groups, remember that rights are cumulative

- Content expiration makes protected content inaccessible after a certain number of days or after a specific date

- License expiration means that the AD RMS client must make a connection back to the AD RMS server to obtain a new license even though the content has not expired
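Cumulative rights and content expiration can be sketched together. A user's effective rights are the union of the rights granted to every principal they belong to, and nothing is granted once the content has expired. The template, email addresses and expiry date are hypothetical; the keys are email addresses because AD RMS identifies principals that way.

```python
from datetime import date

# Hypothetical rights policy template.
TEMPLATE = {
    "rights": {
        "sales@nazaudy.local": {"VIEW"},
        "managers@nazaudy.local": {"VIEW", "EDIT", "PRINT"},
    },
    "content_expires": date(2025, 12, 31),
}


def effective_rights(memberships, template, today):
    """Union of rights from every principal the user belongs to; none after expiry."""
    if today > template["content_expires"]:
        return set()
    rights = set()
    for principal in memberships:
        rights |= template["rights"].get(principal, set())
    return rights
```

A user in both Sales and Managers gets View, Edit and Print, not just the more restrictive View. After the expiry date, they get nothing.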

Exclusion policies; you use exclusion policies to block specific entities (such as applications, users and lockbox versions) from interacting with AD RMS

AD RMS uses 3 databases, hosted either in SQL Server or the Windows Internal Database, and each of them must be backed up; these are the 3 goodies:

- Configuration Database

- Directory Services Database

- Logging Database

To restore AD RMS, first restore the 3 databases, then reinstall the AD RMS role and choose to join an existing cluster

